How to Spot Research Spin: The Case of the Not-So-Simple Abstract

Spin doctoring is deliberate manipulation. I don’t think everyday research spin is intended to deceive, though. Mostly it’s because researchers want to get attention for their work and so many others do it, it seems normal. Or they don’t know enough about minimizing bias. It takes a lot of effort to avoid bias in research reporting.

Regardless of why it’s done, the effect of research spin is the same. It presents a distorted picture. And when that collides with readers who don’t have enough time, inclination, or awareness to see the spin, people are misled. It’s one way strong beliefs keep being shored up by flimsy foundations.

A classic example of research spin kept flying through my Twitter feed this last week. The message was one that must have kicked a lot of confirmation bias dust into the eyes of people who pushed it along: more grist for the scientists-don’t-write-well mill. It’s a good example to discuss the mechanics of research reporting bias, because the topic (though not the paper) is easy to understand for anyone who reads scientific literature: the language used in abstracts in science journals. But a little background before we get to it.

Research spin is when findings are made to look stronger or more positive than is justified by the study. You can do it by distracting people from negative results or limitations by getting them to focus on the outcomes you want – or even completely leaving out results that mess up the message you want to send. You can use words to exaggerate claims beyond what data support – or to minimize inconvenient results.

It was ironic that this example was a study of abstracts. Abstracts evolved as marketing blurbs for papers – and they’re the mother lode of research spin. (More on that in this post.) Other than the title, it’s the part of a paper that will be read the most. And with some exceptions I’ll get to later, there’s not all that much guidance for how to do this critical research reporting step well.

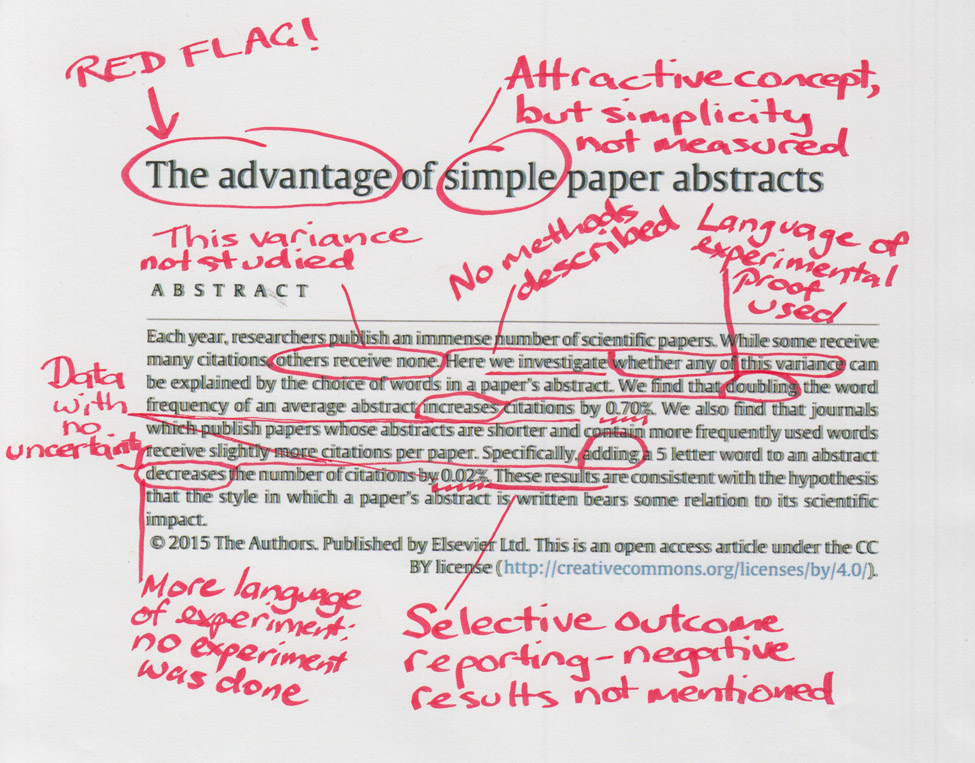

This is the study, by Adrian Letchford and colleagues. They conclude their statistical modeling data supports their hypothesis that papers with harder-to-understand abstracts get cited less. I’ve scribbled pointers to red flags for research spin on the title and abstract and I’ll flesh those out as we go. I, and most others in my Twitter circle I expect, first heard of this study because Retraction Watch decided this study was worth covering.*

Right from the title, it’s clear this is not an objective description of a research project. And that’s usually not a good sign. Out of the many analyses the authors report in the paper and its supplementary information, in the abstract they include only some that confirm their hypothesis – with no mention that there were some that don’t. For example, one analysis found that among the papers published in the same journal, the longer abstracts were associated with increased citations.

There’s no explanation in the abstract of what kind of study it was. But the language chosen sounds like an experimental study’s results. For example: “Doubling the word frequency of an average abstract increases citations by 0.70%”. There was no experiment of what happens when you double “word frequency” of an abstract. The data come from statistical modeling, with various assumptions and components. “Word frequency” here might not mean what you think it means. (It’s confusing: more on that later.)

Just what difference these numbers might mean is not put in any context. There’s just a very precise number, without showing how much uncertainty is associated with that result.

Let’s go to the authors’ aim and hypothesis. Did they investigate the reasons for variance between studies that have many citations and those that have none as the abstract implies? Could this study support the authors’ hypothesis and conclusions?

The first step is what’s known so far about whether citation rates are affected by the language and length of abstracts. The paper cites 2 previous studies: 1 was from 2013, and the authors found no impact of length of abstract. The other was from 2015, and those authors found that shorter abstracts were associated with fewer citations – except for mathematics and physics. Using more common and simpler words was also associated with fewer citations.

The first step is what’s known so far about whether citation rates are affected by the language and length of abstracts. The paper cites 2 previous studies: 1 was from 2013, and the authors found no impact of length of abstract. The other was from 2015, and those authors found that shorter abstracts were associated with fewer citations – except for mathematics and physics. Using more common and simpler words was also associated with fewer citations.

So from the paper itself, we already know that these authors’ conclusions conflict with other research. When I looked at the citations of that 2013 study in Google Scholar, I quickly found another 2 studies [PDF, PDF], and they had similar results. (There could be more.)

Why, then, did this group do their study – and without a thorough search for existing evidence? They write that they found the results of those 2 studies “surprising”, “in the light of evidence from psychological experiments” about reading. At some point in the process, they were “inspired by the idea that more frequently used words might require a lower cognitive load to understand”.

It’s hard to see the direct relevance to scientists’ work of this hypothesis. Most citations in the scientific literature should be by authors with a reasonable grasp of the subject they are writing about, and its jargon. If a title or abstract is not using the expected jargon, you could assume it could be overlooked. But unless a paper is truly undecipherable, why would we avoid citing papers with abstracts dense with jargon? (You might assume that journalists could gravitate more towards papers with abstracts they find easy to understand, but media attention is not part of the mechanism to increased citation these authors hypothesize.)

But that’s why they did it. So how did they go about it, and what were the risks of bias in their approach?

They took 10 years’ worth of science journal articles and citation data from the Web of Science. Because of limits to what can be downloaded, they took only the top 1% most highly cited papers from each year. There wasn’t a sample size calculation. (Presumably only English language abstracts were included.)

The 1%-ers were in around 3,000 journals a year. All journals with 10 or fewer papers in the top 1% in each year were excluded. That brought their sample down to 1%-ers concentrated in around 500 journals a year. (There was no data for papers that weren’t highly cited.)

What fields of science these journals come from wasn’t analyzed. The authors argue that factoring out journals in some analyses means “any effect of differences between fields is also accounted for”. No analysis was provided to support that. Journals with policies with very short word limits for abstracts (like Science) weren’t analyzed separately either.

And then they started doing lots of analyses and building successive models. There’s always a risk of pulling up flukes doing that, even though they used a statistical method to try to reduce the risk. How many analyses were planned beforehand wasn’t reported. (You can read more about why that’s important here.)

And then they started doing lots of analyses and building successive models. There’s always a risk of pulling up flukes doing that, even though they used a statistical method to try to reduce the risk. How many analyses were planned beforehand wasn’t reported. (You can read more about why that’s important here.)

For the final model that arrives at the numbers used in the abstract, data on authors are added out of the blue, with no explanation of why. By that point in the article, I found it hard not to wonder what other analyses had been run but not reported.

What counted as “simple” language across all these disciplines? How often abstracts used frequently used words in Google Books. That’s not the same as writing simply. And just because professional jargon isn’t in widespread use among book authors, doesn’t mean that the words aren’t in frequent use within a professional group.

Here’s the key bit that explains their use of “word frequency”:

We find that the average abstract contains words that occur 4.5 times per million words in the Google Ngram dataset. According to our model, doubling the median word frequency of an average abstract to 9 times per million words will increase the number of times it is cited by approximately 0.74% [sic]. If the average English word is approximately 5 letters long, then removing a word from an abstract increases the number of citations by 0.02% according to our model.

It’s not clear that the Google dataset shows us the simplest words. For example, “genetic” and “linear” appear far more frequently than words like “innovative”, “breakthrough”, or even “apple”. That doesn’t mean linear is a simpler word than apple. One of the purposes of jargon is precision: removing it while also using fewer words doesn’t necessarily make something simple to read either. And for much research, a big part of a good abstract is numbers and statistical terms.

Cody Weinberger and colleagues, who conducted the 2015 study referred to above, have very different recommendations. They conclude that writing with the jargon needed for articles to be retrieved is critical for citation:

An intriguing hypothesis is that scientists have different preferences for what they would like to read versus what they are going to cite. Despite the fact that anybody in their right mind would prefer to read short, simple, and well-written prose with few abstruse terms, when building an argument and writing a paper, the limiting step is the ability to find the right article.

Good communication is important. Obscure communication makes it harder to work out what researchers actually did – and that can push people into simply relying on the researchers’ claims. (The word frequency issue in this paper about abstracts is a good case in point!)

In clinical research, there’s a strong and growing set of standards helping us improve research reporting – including in our abstracts. See for example reporting standards for abstracts of clinical trials [PDF]. (More about the origins of organizing to improve research reporting from the brilliant Doug Altman here.) Many of us are also working to ensure that there are good descriptions of research and other jargon to help non-experts make sense of research (for example here).

Good science should be transparent. The writing and data presentation should be a clear window into it. Ultimately, though, methodologically strong research reporting that avoids spin is far more critical an issue for good science than the use of jargon in abstracts.

~~~~

Disclosure: I’m one of the authors of the research reporting guidelines for abstracts of systematic reviews. (Ironically, because of the journal it’s published in, has an abstract so short, it doesn’t meet standards for anything except brevity! Further disclosure: I’m an academic editor for that journal, but bear no responsibility for this policy.)

[Update on 5 April 2016] On 17 March I submitted a comment to Retraction Watch about serious errors and bias in their reporting of this study. The comment was rejected by moderators in its original form. After negotiation, a version of my comment was published. The original wording that I had to were “serious errors” and “bias”. There has been no correction of the Retraction Watch post.

Study Report, Study Reality, and the Gap in Between

There’s lots more about the history and quality of abstracts in this post:

Science in the Abstract: Don’t Judge a Study by its Cover

More detail about the history of raising the standard of reporting of clinical research from Doug Altman here.

The cartoons are my own (CC-NC-ND-SA license). (More cartoons at Statistically Funny and on Tumblr.)

I updated the cartoon to include Olive Jean Dunn, not Bonferroni on 20 March 2016: you can read why here.

* The thoughts Hilda Bastian expresses here at Absolutely Maybe are personal, and do not necessarily reflect the views of the National Institutes of Health or the U.S. Department of Health and Human Services.

Excellent post. You write:

“Mostly it’s because researchers want to get attention for their work and so many others do it, it seems normal.”

In fact, this hypothesis was recently confirmed by NPG reviewers after our complaint of hype:

http://bjoern.brembs.net/2015/04/nature-reviewers-endorse-hype/

What label should one use when the researchers’ conclusions contradict their own evidence?

http://www.ncbi.nlm.nih.gov/pubmed/26521770

Rehabilitative treatments for chronic fatigue syndrome: long-term follow-up from the PACE trial.

FINDINGS: There was little evidence of differences in outcomes between the randomised treatment groups at long-term follow-up.

INTERPRETATION: The beneficial effects of CBT and GET seen at 1 year were maintained at long-term follow-up a median of 2·5 years after randomisation.

In economics this situation is what is called an information asymmetry. One party to the transaction has information the other does not. That is, the authors know what they did and what they found (most of the time) – the reader does not. The authors also know it’s doubtful the reader has time to go through the entire study. Time is scarce for an active scholar.

This has been made worse by paywalls. It is easier to read an abstract than to get the entire article. In practice, those who read the actual article are the ones who are the most interested in the subject, but there are circumstances where they may not have access to the article itself. If you have an outside organization (such as the MRC) painting a rosy picture of the project that can be portrayed in the abstract – but not the article itself – temptation to doctor the abstract increases. And when important government policy decisions – or the decisions that can save insurance companies money – depend on the results of the study, temptation to doctor the abstract increases still more.

At this point, it would be logical to have a regulatory agency ensuring that the abstract and the article both say the same thing. That is where journals come in. Originally, academic journals represented scholars in a particular discipline. Reviewers from that discipline were tasked with ensuring – first and foremost – that the conclusions claimed for the article have actually been demonstrated to be accurate.

We certainly would expect that the more prestigious the journal, the more careful the editors would be to ensure that the conclusion and abstract actually follow from the study described in the body of the article.

Over time, however, this system has broken down. When it comes to the point that you cannot trust the journal editors to ensure the abstract and article say the same thing, then the market of ideas gets clunky, slowed down. Becomes more expensive, in the sense that it takes even MORE time to be able to demonstrate that a published article bears little relationship to what was claimed for it.

The authors do not have to be deliberately deceptive – they could simply be obtuse. If I see one more article where correlation is confused with causation, I may give up reading abstracts altogether. But such fallacies are more likely when they lead to a conclusion desired by external forces (government, insurance, Big Pharma). Even journal editors are not immune to prejudices that might allow them to skip the scrutiny required for all acceptances.

The bottom line, however, is that the main incentive to be honest is up to the particular researchers’ own sense of integrity, own values, own priorities. Sad to say, that seems to not be enough.

Applying the economics of information to this situation, we have to warn scholars that if you increase the cost of acquiring accurate information about a study, there will be fewer honest studies, period. The quality of scholarship will decline, period. Public trust in scholarship will decline, period.

No one wants that outcome.

What is the solution? Journals and professional organizations must do a better job of regulating the market of ideas – if they want their product to continue to be seen as high quality.

Along those lines, one can only applaud the purposes of the PLOS ONE journals – to allow access (thus making it harder to disguise what happened in the study with a dishonest abstract) and to require authors to release data sets to other researchers once they have finished whatever block of studies they were working on. Both actions decrease the costs of acquiring information, because the authors know that if they are cheating, it can be found out.

However, the impact of open publications and open data is only as strong as the degree to which it actually happens. The openness of PLOS ONE is only as strong as it is enforced.

When Nobel-Prize winning economist George Akerlof wrote about the consequences of information asymmetry in markets, his first example was used cars. We do not want professional journals to descend to the level of a used car lot. In the case of the PACE trials, efforts to keep scholars and other interested parties from evaluating the relationship between the data from the actual study – and results published in journals, online, and even in the MSM – have reduced expectations of quality in all research having to do with “chronic fatigue syndrome.”

We do not want the market for ideas to look like the market for used cars. Cheers to the open data movement and journals such as PLOS ONE for trying to turn the trend around.

Thanks, Björn! That’s a frustrating case you report, and I agree hyping of research results should be taken far more seriously. You refer to Mike Eisen on this in your post, and I agree with the statement you reference:

The problem at the scientist and journal level gets worse when journalists, instead of resisting and puncturing the hype, actually hype up the already-hyped results, as happened with the case in my post. The scientists, journals, and journalists often do it based on the same interests – the desire to get attention, for their own benefit. They are not always aware of it. But ignorance is no longer any excuse when the bias and errors in their research reporting are drawn to their attention.

Unfortunately, although I had a bit better outcome, I experienced a similar problem to you when I wrote to Retraction Watch. It was the source of the publicity the study in my post gained, quite some time after the study’s release. Richard van Noorden from Nature tweeted that they had passed on covering this study because of its obvious problems, which was the right thing to do in this case. (That, or criticism of it.) The Retraction Watch post hyped the study – and in doing so, added spin and error.

Here’s the update I added to my post about how that went: