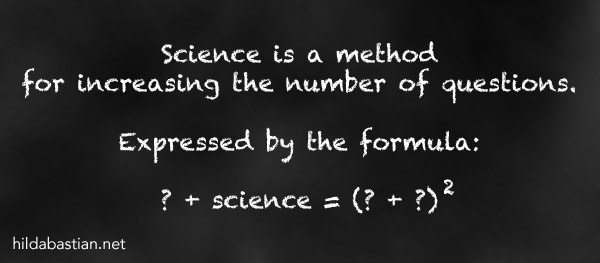

Science: A Method for Increasing the Number of Questions

A long time ago, I first encountered science seriously as a health consumer advocate. And I thought of medical and health research as a search for answers. Scientists were Popper’s problem-solvers, using rigorous testing to sort out the wheat of reliable answers from the chaff of the false leads.

But over time, as I watched the research pile up exponentially, I saw that the number of questions was zooming up even faster.

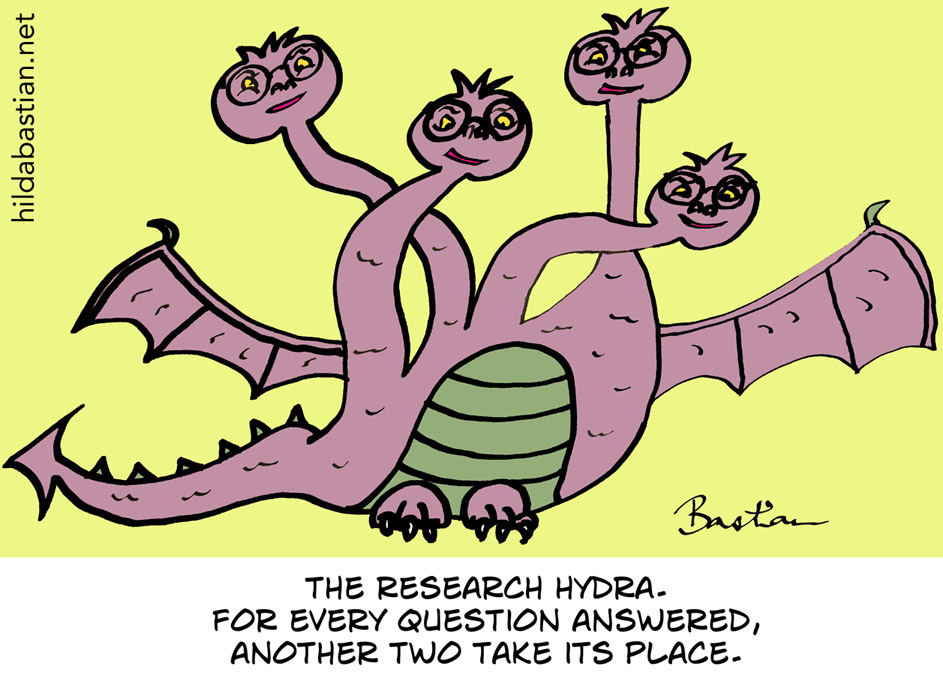

We find out treatment A works. Who – and what – else could it work for? Can you have half the dose and still get just as much benefit? Does double the dose do more good than harm? Will it work even better if you combine it with treatment B? Combine it with treatment C? Combine it with B and C? Is it better than old treatment Q? Will it work in gel form? In spray? . . . . .

Turns out scientific studies are, perhaps above all, a great way to generate more questions.

Once when I tweeted about this, David Norris replied with a pointer to a paper by physicist John R. Platt, on strong inference, arguing for the importance of multiple hypotheses as a driver of knowledge [PDF]. Platt is talking about the harm that becoming attached to a single hypothesis can cause. And I’m all in with him on that.

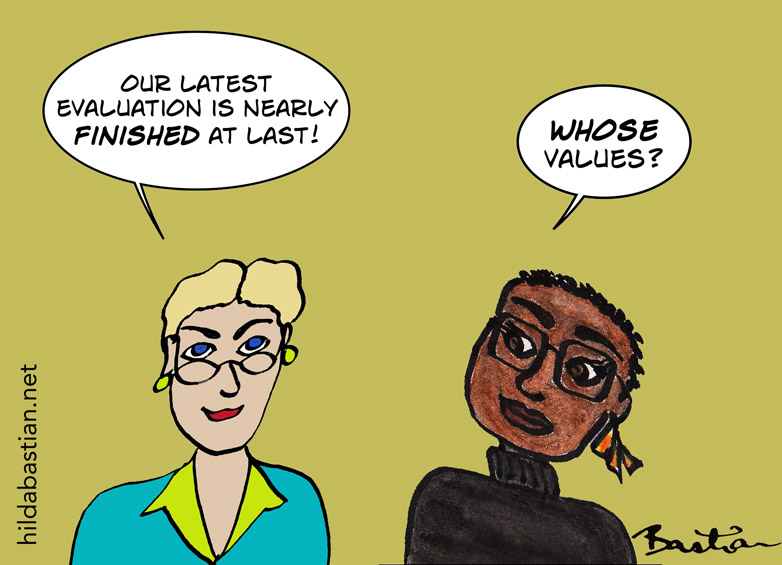

But his paper, published in 1964, is steeped in the dominant science culture of that time. It’s all “the quality or education of the men drawn into it [science]”, and “the man of one method”, and “the man to watch, the man to put your money on”. Wince! If questions are so important to how our knowledge grows – and they are – then the issue of who gets to ask those questions is a fundamental concern. It can have a profound impact on what questions get addressed at all, and what is seen.

The consequences of so much science having been shaped by one gender and one race and just a few countries keep coming up. But there are a lot of other icebergs out there, too. A recent paper by Keith Stanovich and Maggie Toplak showed another – on the subject of measuring open-mindedness, no less.

There’s a questionnaire for measuring open-mindedness, and it’s used in research on beliefs in conspiracy theories, other reasoning traps, and more. Lately, some studies that used it came up with what Stanovich and Toplak called “startlingly high negative correlations with religiosity”. This was an artifact derailing the whole exercise, they found. And, they concluded, it was caused by a type of item in the questionnaire:

> To our consternation, we realized that it was our research team that had introduced these items into the literature two decades ago, but we had heretofore never realized the potential for these items to skew correlations. In a new experiment, we demonstrate how BR items of this type disadvantage religious-minded subjects, and we show that it is possible to construct BR items with parallel content that are not so demographically biased. We also show that unbiased BR items do not sacrifice the predictive power that has previously been shown by AOT scales. We believe this lesson in item construction resulted from the lack of intellectual diversity in our own laboratory.

Kudos to Stanovich and Toplak for writing about this. Diversity among scientists is critical, this example reminds us. Working across disciplines should help, too, along with working closely with people affected by the problems scientists are trying to solve. The experience of researchers in rheumatology after they embraced consumer participation is a classic example of the practical implications this can have. An evaluation of that experience shows how it changed what was measured in that research – adding fatigue, sleep disturbance, and disease flares to the core research agenda – and how it changed research culture as well.

We’re all fish who, in some way or another, can’t see the water we’re swimming in, aren’t we? And we shouldn’t forget it.

~~~~

The cartoons are my own (CC BY-NC-ND license). (More cartoons at Statistically Funny and on Tumblr.)