Biomedical Research: Believe It Or Not?

It’s not often that a research article barrels down the straight toward its one millionth view. Thousands of biomedical papers are published every day. Despite often ardent pleas by their authors to “Look at me! Look at me!,” most of those articles won’t get much notice.

Attracting attention has never been a problem for this paper though. In 2005, John Ioannidis, now at Stanford, published a paper that’s still getting about as much attention as when it was first published. It’s one of the best summaries of the dangers of looking at a study in isolation – and other pitfalls from bias, too.

But why so much interest? Well, the article argues that most published research findings are false. As you would expect, others have argued that Ioannidis’ published findings themselves are false.

You may not usually find debates about statistical methods all that gripping. But stick with this one if you’ve ever been frustrated by how often today’s exciting scientific news turns into tomorrow’s de-bunking story.

Ioannidis’ paper is based on statistical modeling. His calculations led him to estimate that more than 50% of published biomedical research findings with a p value of < 0.05 are likely to be false positives. We’ll come back to that, but first meet two pairs of numbers experts who have challenged this.

Round 1 in 2007: enter Steven Goodman and Sander Greenland, then at Johns Hopkins Department of Biostatistics and UCLA respectively. They challenged particular aspects of the original analysis. And they argued we can’t yet make a reliable global estimation of false positives in biomedical research. Ioannidis wrote a rebuttal in the comments section of the original article at PLOS Medicine.

Round 2 in 2013: next up are Leah Jager from the Department of Mathematics at the US Naval Academy and Jeffrey Leek from biostatistics at Johns Hopkins. They used a completely different method to look at the same question. Their conclusion: only 14% (give or take 1%) of p values in medical research are likely to be false positives, not most. Ioannidis responded. And so did other statistics heavyweights. [Update March 2017: Leek published another paper, with Leah R. Jager, again pitching for 14%.]

So how much is wrong? Most, 14%, or do we just not know?

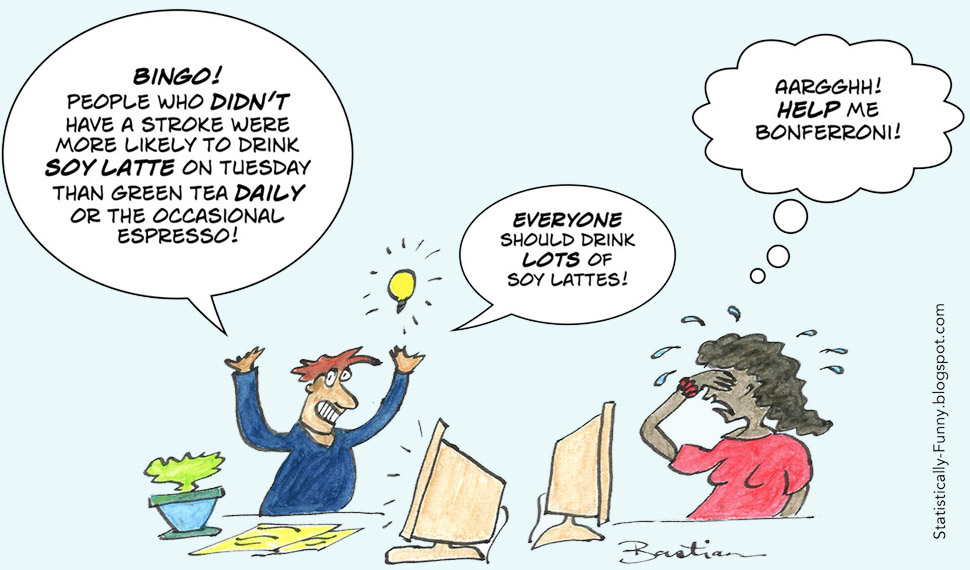

Let’s start with the p value, an oft-misunderstood concept which is integral to this debate about false positives in research. (See my previous post on its part in science downfalls.) The gleeful number-cruncher on the right has just stepped right into the false positive p value trap.

Decades ago, the statistician Carlo Bonferroni tackled the problem of trying to account for mounting false positive p values. Use the test once, and the chances of being wrong might be 1 in 20. But the more often you use that statistical test looking for a positive association between this, that and the other data you have, the more of the “discoveries” you think you’ve made are going to be wrong. And the ratio of noise to signal will rise in bigger datasets, too. (There’s more about Bonferroni, the problems of multiple testing and false discovery rates at my other blog, Statistically Funny.)
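To put rough numbers on that "1 in 20" problem, here is a minimal Python sketch. It assumes the tests are independent and that there is no real effect anywhere, which is a simplification real datasets rarely meet. The function names are made up for this illustration.

```python
def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """Chance of at least one false positive across n_tests tests.

    Assumes independent tests and that every null hypothesis is true.
    """
    return 1.0 - (1.0 - alpha) ** n_tests


def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test p value threshold under the Bonferroni correction."""
    return alpha / n_tests


if __name__ == "__main__":
    for k in (1, 5, 20, 100):
        rate = familywise_error_rate(0.05, k)
        cutoff = bonferroni_threshold(0.05, k)
        print(f"{k:>3} tests: chance of a false positive {rate:.2f}, "
              f"Bonferroni cutoff {cutoff:.4f}")
```

With one test the chance of a false alarm is 5%. With 20 independent tests it is already around 64%, which is why the Bonferroni correction asks each individual test to clear a much stricter threshold.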

In his paper, Ioannidis takes not just the influence of the statistics into account, but bias from study methods too. As he points out, “with increasing bias, the chances that a research finding is true diminish considerably.” Digging around for possible associations in a large dataset is less reliable than a large, well-designed clinical trial that tests the kind of hypotheses other study types generate, for example.
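The shape of that argument can be sketched in a few lines of Python. This is a rough reconstruction of the kind of model Ioannidis describes, not a copy of his analysis. It assumes four inputs: `R` (the odds, before the study, that a tested relationship is real), `alpha` (the false positive rate of the test), `beta` (the false negative rate, so power is `1 - beta`), and `u` (the share of would-be negative results that end up reported as positive because of bias). The input values below are illustrative only.

```python
def ppv(R: float, alpha: float = 0.05, beta: float = 0.2, u: float = 0.0) -> float:
    """Probability that a claimed positive finding is actually true.

    R     : pre-study odds that a tested relationship is real (assumed input)
    alpha : false positive rate of the statistical test
    beta  : false negative rate (power = 1 - beta)
    u     : bias, the fraction of would-be negatives reported as positive
    """
    # Real relationships reported as positive: detected, or missed but
    # pushed over the line by bias.
    true_positives = (1 - beta) * R + u * beta * R
    # Null relationships reported as positive: chance findings, or true
    # negatives flipped by bias.
    false_positives = alpha + u * (1 - alpha)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    for bias in (0.0, 0.1, 0.3, 0.5):
        print(f"R=1.0, bias={bias:.1f}: PPV = {ppv(1.0, u=bias):.2f}")
    # Exploratory digging: long odds before the study, plus substantial bias.
    print(f"R=0.1, bias=0.3: PPV = {ppv(0.1, u=0.3):.2f}")
```

Under these assumptions, a well-powered study of a 50:50 hypothesis stays mostly trustworthy even with some bias, while a long-shot hypothesis with heavy bias drops well below a coin toss. How large `u` realistically is remains exactly the point in dispute.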

How he does this is the first area where he and Goodman/Greenland part ways. They argue the method Ioannidis used to account for bias in his model was so severe that it sent the number of assumed false positives soaring too high. They all agree on the problem of bias – just not on the way to quantify it. Goodman and Greenland also argue that the way many studies flatten p values to “< 0.05” instead of the exact value hobbles this analysis, and our ability to test the question Ioannidis is addressing.

Another area where they don’t see eye-to-eye is on the conclusion Ioannidis comes to on high profile areas of research. He argues that when lots of researchers are active in a field, the likelihood that any one study finding is wrong increases. Goodman and Greenland argue that the model doesn’t support that, but only that when there are more studies, the chance of false studies increases proportionately.

Jager and Leek used a completely different method to look at the question Ioannidis raised. They mined 5,322 p values from the abstracts of all the papers in 5 major journals across a decade. Then they used a false discovery rate (FDR) technique adapted from work done in genomic studies. They acknowledge that work is needed to see how applicable FDR is for non-genomic studies, but that their work still shows the real false finding rate must be a long way short of “most.”
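For readers unfamiliar with the false discovery rate idea, here is a small Python sketch of the Benjamini-Hochberg procedure, a standard and much simpler FDR method. To be clear, this is a swapped-in illustration of what "controlling a false discovery rate" means. It is not the method Jager and Leek used, and the p values are invented for the example.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans: True where a p value counts as a discovery.

    Benjamini-Hochberg step-up procedure: sort the p values, find the largest
    rank k with p_(k) <= (k / m) * q, and accept the k smallest as discoveries.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])

    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            cutoff_rank = rank  # keep the largest rank that passes

    discoveries = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            discoveries[idx] = True
    return discoveries


if __name__ == "__main__":
    # Made-up p values, purely for illustration.
    ps = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
    naive = sum(p < 0.05 for p in ps)
    kept = sum(benjamini_hochberg(ps, q=0.05))
    print(f"p < 0.05: {naive} findings; after Benjamini-Hochberg: {kept}")
```

The point of the comparison is that a pile of p values just under 0.05 looks less impressive once the number of tests behind them is taken into account.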

Ioannidis is sticking to his guns. He points out that those 5 journals aren’t representative of the literature. For example, the proportion of the studies that were the least-biased types (randomized controlled trials and systematic reviews) was way over 10 times as high as in the general literature. And the p values in abstracts won’t show the whole story either.

Where does that leave us? Is the global rate of false statistical positives in research closer to 15% or 50% or more? I think Goodman and Greenland make the case that we still don’t know. Both of these studies, along with the low rates of successful research replication that Ioannidis also points to, suggest it’s disturbingly high. And there’s no doubt that in some types of research, the chances of being wrong are much higher than others. Ioannidis’ article is a good summary of the problems and many biases that make this so.

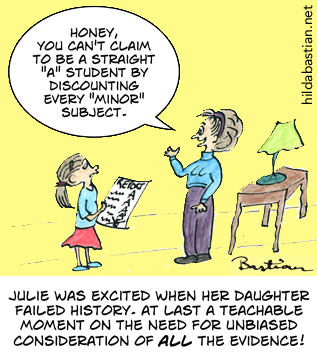

One of the main reasons that heavily biased research does damage is the biases at play when we all decide whether we believe a study finding or not. That’s our tendency to accept relatively uncritically those findings that we want to believe are true – while nit-picking the study findings that are confronting. The biggest bias we have to deal with is our own.

~~~~

More on this in my September posts, Bad research rising and Academic spin. See also “6 tips to protect yourself from data-led error” and “They would say that, wouldn’t they?”

The cartoons in this post are my originals from Statistically Funny posts on the dangers of looking at a study in isolation and multiple testing/false discovery rates.

The “most research findings are false” paper trail:

- Ioannidis’ original paper

- Goodman and Greenland respond at length and in the comments section

- Ioannidis responds to Goodman and Greenland

- Jager and Leek respond with a “false discovery rate” study

- Ioannidis responds

Disclosure: I’m an academic editor at PLOS Medicine, the open access medical journal that published Ioannidis’ paper.

* The thoughts Hilda Bastian expresses here at Absolutely Maybe are personal, and do not necessarily reflect the views of the National Institutes of Health or the U.S. Department of Health and Human Services.