Infographic vs Text: Evidence Throwdown!

A recently published paper reports a trio of randomized trials of an infographic going head-to-head with text. The reaction to the performance of the infographic was interesting. A lot of people clearly have strong beliefs about infographics!

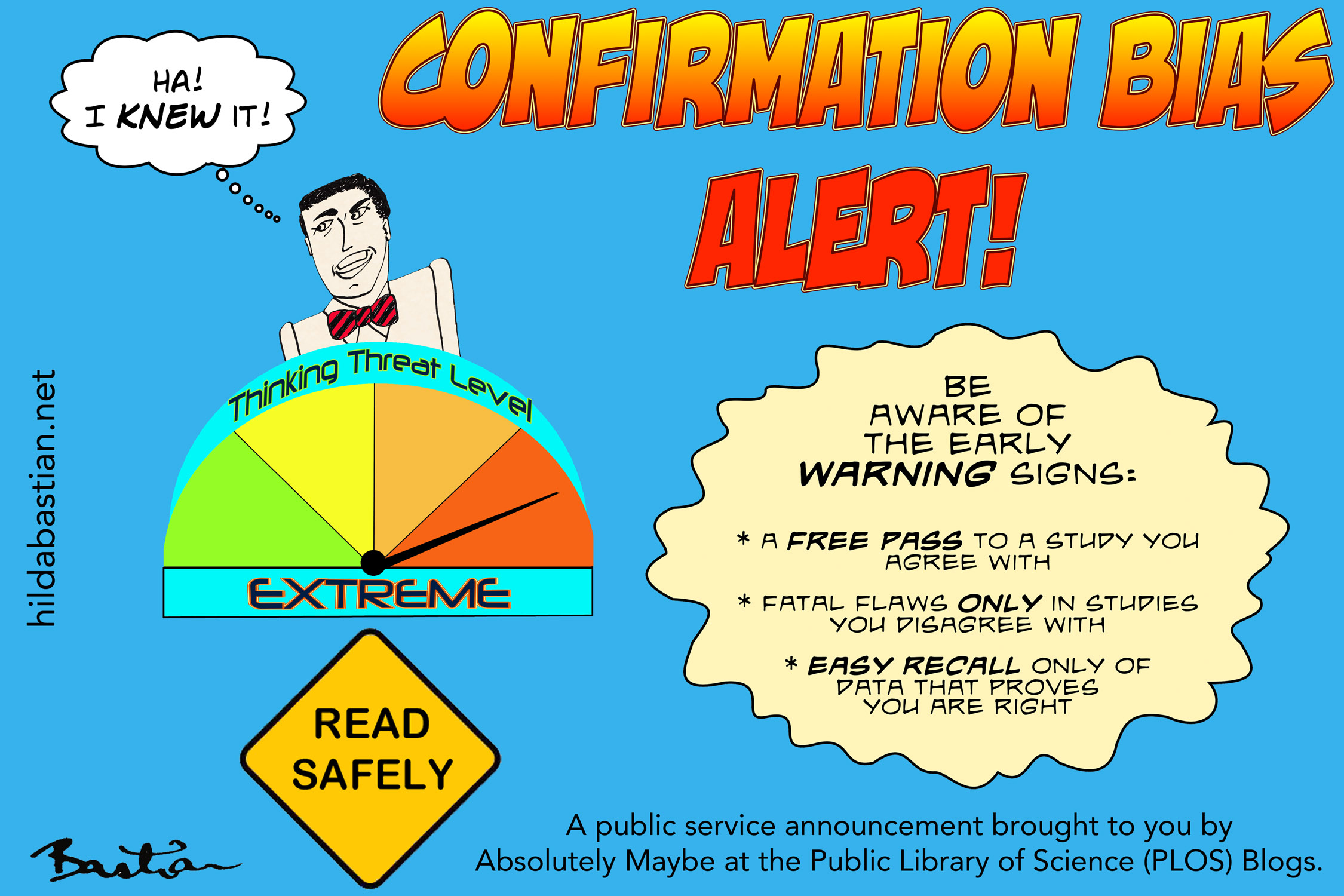

That strength of conviction seems to be in inverse proportion to the strength of evidence about using infographics for complex messages. For some people, the evidence doesn’t matter: that infographics are eye-catching is enough.

There’s a lot to unpack here. It’s not even clear what people mean when they say “an infographic”. It could be anything from a simple graphic visualization of data (a bar chart, or a type of map) through to a design-heavy, elaborate combination of elements.

It’s the more elaborate type we are looking at here. That means the complexity of the communication devices increases enormously – driving up the skills and money required to produce them, as well as the ways they can go wrong. Simultaneously, the evidence decreases.

Michael Rodin describes this elaborate kind of infographic as “a storytelling experiment”, between a short story and a graphic novel (or comic strip). Aurora Socca and Suzanne Suggs describe them as being “a bit like a still video” that shape a variety of elements into a “single unit of still communication”.

Each of the elements you could use to design one will have its own level of usefulness, affecting the usefulness of the overall combination created. With so many moving parts that are still a long way from being well-established conventions, it’s awfully tough to evaluate this as a genre, and in comparison to alternatives.

The new paper by Ivan Buljan and colleagues evaluates a single infographic versus a plain text summary and scientific abstract of a Cochrane systematic review. That review analyzes studies on turning a breech baby at term so that the baby is head down for birth.

There are 3 trials: one in a captive audience (a class of humanities students), and the other 2 in consumers and doctors. The students and doctors were randomized to infographic, plain text, or abstract. The consumers were randomized only to infographic or plain text.

There are several interesting design elements in this trio of trials, and it was great to see such a serious evaluation. The authors conclude that there was no difference in knowledge, based on a 10-question quiz that followed, but that people preferred the infographic. I disagree with that interpretation of the results.

The infographic and the plain text aren’t different formats of the same information. Although they both communicate results of the same systematic review, the content is extremely different. You can see for yourself, here for the infographic – and scroll down here to see the plain language summary.

For example, the plain text has virtually no numbers. Other than weeks of pregnancy and a date related to the research, there are only 2 numbers (that there were 8 studies with 1,308 women). The infographic has 16 additional numerical descriptions (including, for example, “14 per 1,000”). So it’s not only a style of presentation being compared, and that’s critical – especially given what happened next.

The students did not prefer the infographic over the text. They are the only group that really completed the study. The consumers and doctors weren’t in a supervised setting like the students, and they were given a numeracy test after reading the information: 113 out of 212 consumers (53%) and 34 out of 108 doctors (31%) dropped out. That makes the student trial the only one that’s reliable enough: the numeracy test could have disproportionately selected out people who wouldn’t have liked the number-heavy infographic.
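If you want to check the dropout arithmetic for yourself, here is a minimal sketch in Python. It uses only the counts quoted above; the function name `dropout_rate` and the variable names are my own, and I am assuming the "out of" figures are the total number of people in each trial.

```python
def dropout_rate(dropped: int, total: int) -> float:
    """Return the share of participants who dropped out, as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100 * dropped / total


# Counts quoted in the post: (dropped out, total) for each group.
# Assumption: the totals are everyone who started each trial.
groups = {"consumers": (113, 212), "doctors": (34, 108)}

rates = {name: dropout_rate(dropped, total) for name, (dropped, total) in groups.items()}
remaining = {name: total - dropped for name, (dropped, total) in groups.items()}

for name in groups:
    print(f"{name}: {rates[name]:.1f}% dropped out, {remaining[name]} remained")
```

Rounded to whole numbers, this gives the 53% and 31% quoted above. The number who remained in each group is what any preference or knowledge result for consumers and doctors actually rests on.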

The plain text version was also handicapped in the knowledge test: one of the questions was about the quality of the evidence in the systematic review. And the infographic had detailed information on this, while the plain text didn’t. (I have raised some additional issues in my comment on PubMed Commons.)

I agree with the authors’ conclusion that the evidence about infographics is “mixed”. The literature on this makes frustrating reading. There isn’t anywhere near enough evidence about individual design elements or combinations to help us know when it’s good to use what technique. The Cochrane infographic contains a good example: it uses a coffin as a pictograph for mortality. While that makes sense from one point of view, I think that’s a misfire in information intended for patients. We need a lot more work on conventions emerging in infographics. Conventions are much clearer in longer established genres, like cartooning.

It’s hard to find studies of infographics, though I’m sure there are more than I was able to find. I only found slim pickings, with the better reported ones comparing to video, or having nowhere near the rigor of the trials of the Cochrane infographic.

Papers by Jeffrey Griffin and Robert Stevenson give a glimpse of the studies that have been done on using infographics in news media (here and here). There’s a study of nutrition facts labelling, with one version having a small infographic element. And one on trying to change people’s political voting intentions (not much luck there, surprise, surprise!). There’s another on game-inspired infographic scorecards.

So, not much at all. But enough to know that infographics aren’t necessarily a slam dunk.

Some people responded to the trials of the Cochrane infographic with a version of “It doesn’t matter if people aren’t better informed, because infographics attract attention, so that more people read it”. There is some support for the eye-catching argument. For example, a study found little infographics called “visual abstracts” got more attention on Twitter.

There are a couple of problems with this position, though. As with anything else, the most important is the question of harm. So we need more evidence – and we need to care about what it shows. Images and graphs can mislead, or distract people from critical information that’s not in them. (I’ve talked about this a bit here.) In the long run, too, infographics winning extra attention might only work while they’re relatively uncommon and there’s novelty to them.

The idea of “infographic vs plain text” is a bit of a false categorization, though, isn’t it? Infographics also contain text. Our biggest issue is still that not enough scientific information is written to be widely understood and to help people gain perspective on issues and numbers. No matter what we do with graphics, as Griffin and Stevenson point out:

Words remain important.

~~~~

[Update on 1 January 2018: I commented on this paper at PubMed Commons, and added a link.]

If you’re interested in the Cochrane infographic in the trials, its production and the software used are described in this blog post.

Disclosures: Some of the authors of the trial of a Cochrane infographic and plain language summary are friends and current or former colleagues. (I had no knowledge of the trial before its publication.) The Cochrane (text) plain language summaries were an initiative of mine in the early days of the Cochrane Collaboration. Although I wrote or edited a couple of thousand of the original Cochrane summaries, I had no involvement with the one studied here.

The cartoons are my own (CC BY-NC-ND license) – the comic “graphics” come from my 4-part comic, Pylori Story. (More cartoons at Statistically Funny and on Tumblr.)

The photo of New York’s Times Square at night is by Rafi B in Flickr, via Wikimedia Commons.

* The thoughts Hilda Bastian expresses here at Absolutely Maybe are personal, and do not necessarily reflect the views of the National Institutes of Health or the U.S. Department of Health and Human Services.